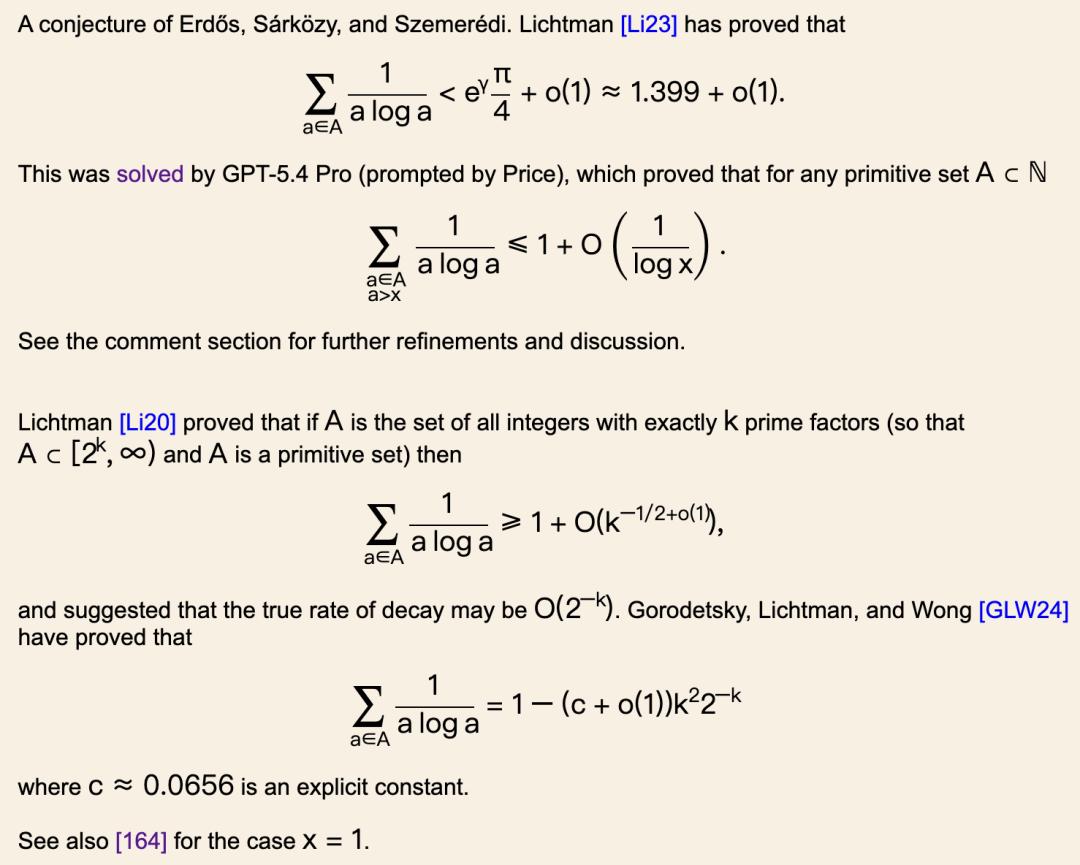

Amateur Solves 60-Year-Old Math Conjecture

A 23-year-old amateur mathematician has surprisingly cracked a 60-year-old conjecture that has puzzled the math community for decades. Liam Price, who has no formal training in higher mathematics, utilized ChatGPT to solve this complex problem.

After reviewing the proof, renowned mathematician Terence Tao remarked, “For the past 60 years, humanity has approached this problem incorrectly from the very first step.”

The Journey of Liam Price

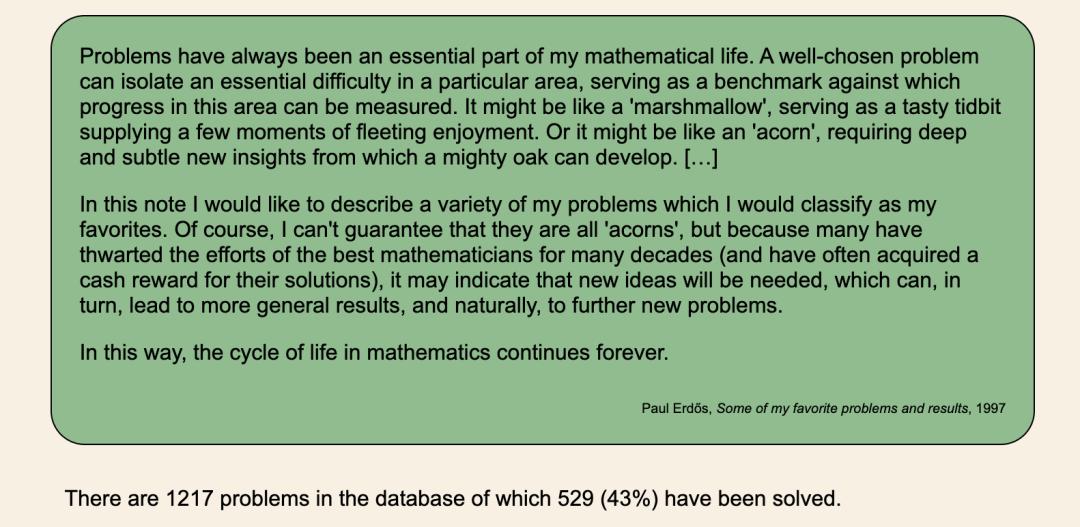

Liam Price is not a traditional math student; he lacks any higher mathematics degree. However, in late 2025, he teamed up with Kevin Barreto, a second-year student from the University of Cambridge, to embark on an unconventional experiment. They randomly selected unsolved problems from the famous Erdős Problems website and fed them directly to ChatGPT without any prior research or reading of related papers.

This method, dubbed “vibe mathing,” relies solely on intuition and simple language to describe problems, allowing the AI to find solutions independently.

Before tackling Erdős Problem #1196, Price and Barreto had made progress on several smaller problems using this method, which drew some attention. When OpenAI heard about their work, it gave them a ChatGPT Pro subscription to encourage further exploration. That subscription turned out to be one of the most rewarding investments in mathematics in 2026.

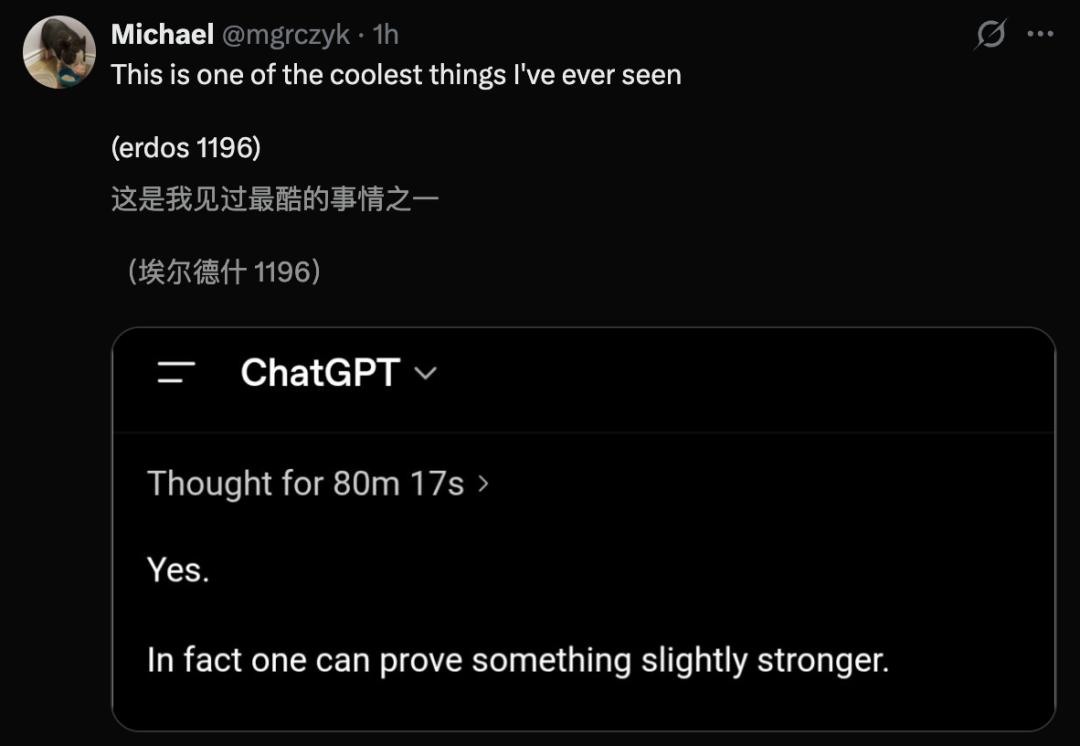

The Breakthrough in 80 Minutes

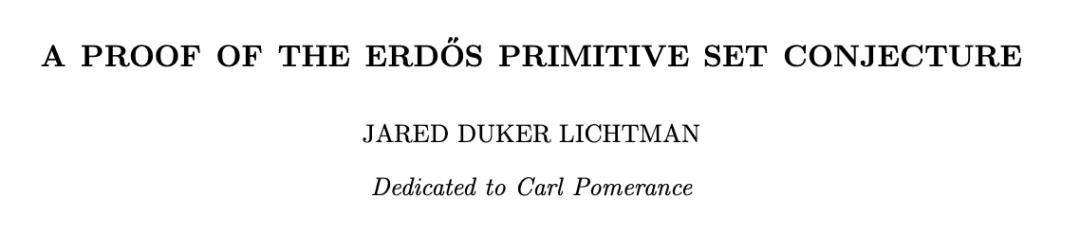

The human mathematician who had gotten furthest on this problem was Jared Lichtman of Oxford University, who spent seven years on a series of important papers that pushed the known upper bound down to approximately 1.399.

However, when Price sent his prompt to ChatGPT, GPT-5.4 Pro worked on it for just 80 minutes before delivering a solution: an asymptotic bound of 1 + O(1/log x).

To clarify, a “primitive set” is a collection of positive integers where no element divides another. For example, {2, 3, 7, 12} is not a primitive set because 12 is divisible by 2 and 3, whereas {2, 3, 7, 11} is.
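The definition above is easy to check mechanically. The following is a minimal sketch in Python; the function name `is_primitive` is hypothetical and chosen only for illustration, and the input is assumed to consist of positive integers.

```python
from itertools import combinations


def is_primitive(numbers):
    """Return True if no element of `numbers` divides a different element.

    Assumes every element is a positive integer.
    """
    values = sorted(set(numbers))
    # After sorting, each pair (a, b) has a < b, so only a can divide b.
    return all(b % a != 0 for a, b in combinations(values, 2))


print(is_primitive([2, 3, 7, 12]))  # 12 is divisible by 2 and by 3
print(is_primitive([2, 3, 7, 11]))  # distinct primes never divide each other
```

Any set of distinct primes passes this check, which is why the primes are the standard example of a primitive set.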

In 1968, Erdős and collaborators Sárközy and Szemerédi proposed a conjecture regarding a specific summation involving primitive sets, suggesting that there exists a clear upper bound in an asymptotic sense. This conjecture had remained unresolved for 58 years.
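The article does not spell out the summation. Assuming it is the sum usually associated with primitive sets, namely the sum of 1/(a log a) over the elements a of the set (an assumption, not something stated above), a minimal sketch of the quantity looks like this. The function name `erdos_sum` is hypothetical.

```python
import math


def erdos_sum(numbers):
    """Sum of 1 / (a * log a) over the distinct elements a > 1.

    Assumption: this is the summation the conjecture refers to; the
    article itself does not give the formula.
    """
    return sum(1.0 / (a * math.log(a)) for a in set(numbers) if a > 1)


# A small primitive set whose elements are all at least 100.
sample = [101, 103, 107, 109, 113]
print(erdos_sum(sample))
```

Under the same assumption, the figures quoted above (roughly 1.399 and 1 + O(1/log x)) would be upper bounds on this quantity for primitive sets whose elements are all at least x.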

The critical difference was not just the speed of the solution but the approach taken. All previous mathematicians, including Lichtman, had defaulted to using tools from analytic number theory, which had confined their thinking to a narrow path.

In contrast, GPT-5.4 Pro took an entirely different route, employing Markov chain methods combined with von Mangoldt weights. These tools are well-established in other branches of number theory but had never been applied to the primitive set problem.

Interestingly, Price noted in an interview with Scientific American that the initial output from GPT was “actually of poor quality.” The proof was lengthy and chaotic, with logical leaps evident throughout. It was Barreto and later experts who sifted through the disorganized deductions to identify the key new insight.

Lichtman’s assessment was cautious yet significant: “This requires experts to filter it to truly understand what it is trying to express.”

He further stated something that silenced the entire community: “This is the first AI mathematical result that reaches the level of Erdős’s book.” The “Erdős book” refers to a metaphor Erdős used, suggesting that God has a book containing the most elegant proofs of every mathematical theorem.

Lichtman’s implication was that the AI had not only solved the problem but had produced a solution that was itself beautiful.

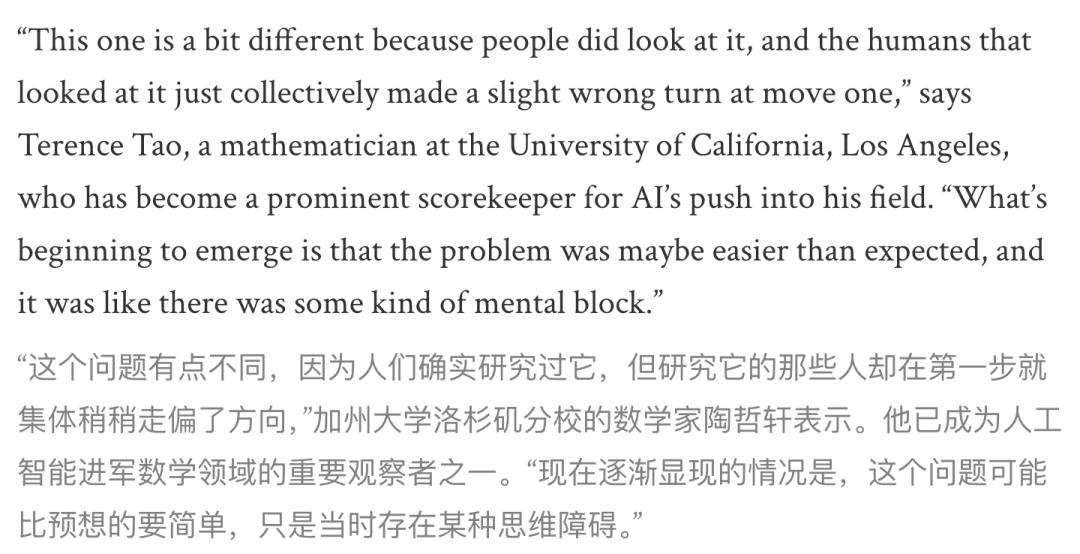

Terence Tao’s Reflection

Fields Medalist Terence Tao’s commentary prompted deep reflection within the community. He stated that previous researchers often adopted a standard approach from the outset. In contrast, the large language model (LLM) took an entirely different route, applying a formula known in related mathematical branches but never considered for this type of problem.

This “collective misstep” traces back to a standard pathway established in 1935: number theory problems were translated into probability theory along the lines of Mertens’ theorem, a step everyone assumed was the right one.

Generations of graduate students learned this translation method first before adding more details.

GPT-5.4 Pro had never learned this “tradition.” Instead, it directly utilized the von Mangoldt function—an object that encodes the fundamental theorem of arithmetic in analytic number theory—taking a completely different path.
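For readers unfamiliar with it, the von Mangoldt function Λ(n) equals log p when n is a power of a prime p and 0 otherwise. The sense in which it encodes the fundamental theorem of arithmetic is the identity that the values Λ(d), summed over all divisors d of n, add up to log n. The sketch below only illustrates this standard definition and identity; it is not the proof's construction, and the helper names are hypothetical.

```python
import math


def von_mangoldt(n):
    """Return log(p) if n is a power of a prime p (n >= 2), else 0.0."""
    if n < 2:
        return 0.0
    p = 2
    while p * p <= n:
        if n % p == 0:
            # p is the smallest prime factor; strip every copy of it.
            while n % p == 0:
                n //= p
            # If nothing is left, the original n was a pure power of p.
            return math.log(p) if n == 1 else 0.0
        p += 1
    return math.log(n)  # no divisor up to sqrt(n), so n itself is prime


def divisor_sum_of_lambda(n):
    """Sum von_mangoldt(d) over all positive divisors d of n."""
    return sum(von_mangoldt(d) for d in range(1, n + 1) if n % d == 0)


# 360 = 2^3 * 3^2 * 5, so the sum is 3*log 2 + 2*log 3 + log 5 = log 360.
print(divisor_sum_of_lambda(360), math.log(360))
```

The two printed values agree up to floating-point rounding, because each prime power dividing n contributes one copy of log p.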

Lichtman later explained that this formula is familiar to everyone in the relevant mathematical fields, but no one had thought to apply it to the Erdős problem.

Tao’s conclusion was even more striking: “We have discovered a new way of thinking about large integers and their structures.”

Lichtman, who spent seven years studying this problem, was overtaken by an amateur who did not know how the problem “should be studied.” In the AI era, ignorance has become a structural advantage: without historical baggage, one can avoid repeating the collective missteps.

A New Key in Mathematics

In 1900, David Hilbert presented 23 problems at the International Congress of Mathematicians in Paris, defining the direction of mathematics throughout the 20th century. At that time, no more than a few hundred people worldwide could reach the frontier of mathematics.

On a Monday afternoon in April 2026, a 23-year-old man with a single prompt achieved in just 80 minutes what many had failed to achieve for decades. The bar for mathematics has not been lowered, but a new key has been added. Those holding this key no longer need to spend ten years learning all the detours taken by their predecessors.
